Get to know the researchers

Gain an in-depth understanding of their research and how it connects to our UN SDG commitments.

Project Presentations

This year’s research conference includes project presentations by undergraduate students on their research experiences. Hear firsthand as these students share their experiences and discuss the various research opportunities available to undergraduates. Explore a variety of topics inspired by the UN’s Sustainable Development Goals (SDGs). Hear about recent discoveries, cutting-edge research, and emerging technologies in fields such as machine learning algorithms, bioengineering, RSO satellite models, water ice mapping on Mars, and more.

Researchers


UN SDG 3: Good Health & Well-being

Bilqees Yousuf

Program: LURA

Supervisor: Peter Park

Abstract: Development of a Post-Crash Care Dashboard

Vision Zero is a road safety philosophy that has been implemented in transportation planning across the world. This philosophy aims to diminish vehicle-related fatalities and injuries through strategic planning of various road safety elements. These elements include Safe Roads, Safe Vehicles, Safe Speeds and Safe People. Presently, Canada's Vision Zero documentation does not address one key element of the road safety strategic plan. This element is Post-Crash Care (e.g., Emergency Medical Services; EMS), which dispatches first responders to help victims of vehicle crashes by transporting them to emergency medical care centres. Firefighters also act as first responders, dispatching fire engines to vehicle crash locations from local fire stations to ensure the fastest response time. The EMS vehicle response time is a critical value that is continuously monitored to determine EMS vehicle response capabilities. This data is used to assess the emergency response capabilities of the existing infrastructure, and it can affect, for instance, the need for the development of a new fire station. The focus of this project is to develop an interactive Post-Crash Care Dashboard using ArcGIS Online. The dashboard is developed using the City of Vaughan’s fire incident data, which contains dispatching information for vehicle crashes and extrications. The dashboard includes elements such as incident maps and various charts that display incident location, alarm type, time of the incident, travel time zones, and EMS response time percentile statistics. The dashboard enables visualization of the incident data based on filters applied to its elements in response to user input.
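As an illustration of the response time percentile statistics mentioned above, the following is a minimal Python sketch of a nearest-rank percentile. The function name and the sample response times are hypothetical; they are not taken from the City of Vaughan data or from the dashboard itself, which is built in ArcGIS Online.

```python
import math

def percentile_nearest_rank(values, p):
    """Return the p-th percentile (0 < p <= 100) using the nearest-rank method."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(values)
    # 1-based rank of the smallest value with at least p% of the data at or below it.
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]

# Hypothetical response times in seconds (illustrative only).
response_times_s = [240, 310, 275, 420, 390, 260, 505, 330, 295, 360]
p90 = percentile_nearest_rank(response_times_s, 90)
p50 = percentile_nearest_rank(response_times_s, 50)
```

A 90th percentile of this kind answers the question "within how many seconds did 90% of responses arrive?", which is a common way to summarize response capability.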

Dhyan Thakkar

Program: LURA

Supervisor: Satinder K. Brar

Abstract: Toxicity Studies of Imipenem & Imipenem Copper Complex on Two Common Wastewater Bacterial Species

The over-consumption of antibiotics over the years has led to their high detection rates in wastewater systems. Further, with the co-occurrence of metals and antibiotic residues in wastewater treatment plants (WWTPs), there is a strong possibility of formation of antibiotic metal complexes (AMCs), which are usually more stable and more toxic to microbial communities. Imipenem is a broad-spectrum, relatively new antibiotic that is used as a last resort for patients with severe bacterial infections. Imipenem can interact with metals, such as copper(II), and affect the microbial communities in these aquatic environments. In this regard, this study compares the effect of imipenem and imipenem-Cu(II) complexes on two bacterial species, E. coli and B. subtilis. E. coli and B. subtilis are gram-negative and gram-positive bacteria, respectively, and are commonly detected in WWTPs. Copper was selected because of its high occurrence in WWTPs; moreover, copper is known for its antibacterial properties. The present study determines the minimum inhibitory concentrations (MIC) for imipenem and imipenem-Cu(II) (1:1 and 1:2) and compares the colony forming unit (CFU) counts as a measure of toxicity. We expect the CFU count for imipenem-Cu(II) to be much lower than the CFU count for imipenem because AMCs tend to be more toxic to bacterial communities. Further, the MIC values will provide insight into how much imipenem is required to have a severe impact on bacterial species. This study aims to provide clarity on the issue of imipenem pollution in wastewater systems, and its effects on microbial studies.

Joaquin Ramirez-Medina

Program: LURA

Supervisor: Pouya Rezai

Abstract: In-vivo Microfluidic Assays to Investigate Cardiac Functional Impairments and Developmental Abnormalities Associated with SARS-CoV-2 ORF3a Protein Expression in Drosophila Larval Heart

In-vivo imaging of Drosophila embryos and larvae requires complete immobilization and orientation for optical accessibility to different organs and cells. Here, a previously developed microfluidic device and heartbeat quantification software were used to study the effect of SARS-CoV-2 ORF3a protein expression in the heart of Drosophila larvae. Using the GAL4-UAS expression system, the ORF3a protein was specifically overexpressed in the heart of 3rd instar Drosophila larvae. Larvae were loaded, oriented, and immobilized inside the microfluidic device using loading and orientation glass capillaries, and immobilization microchannels, respectively. Then, heart activity was video-recorded and the heartbeat parameters were measured using MATLAB-based software. To orient Drosophila embryos, we proposed another microfluidic device capable of out-of-plane rotational manipulation. We trapped bubbles within the microchannel of the device and used a piezoelectric transducer to generate controlled vibrations that create vortices about the bubbles. At the larval stage, the heart-specific overexpression of ORF3a in larvae increased the heart rate (HR) and arrhythmicity (AR) by 17% and 24%, respectively. For embryos, the optimized frequency and peak-to-peak voltages for out-of-plane rotation were found to be 5 kHz and within 1 to 3.5 V, respectively. Furthermore, for a stable bubble size within the microchannel, we determined that the required internal pressure of the microchannel was ~4.9 kPa, thereby preventing rectified diffusion. The heart-specific overexpression of ORF3a protein significantly increased the HR and AR of Drosophila larvae. Moreover, we have developed an acoustofluidic rotational manipulation technique for high-throughput imaging of embryos.

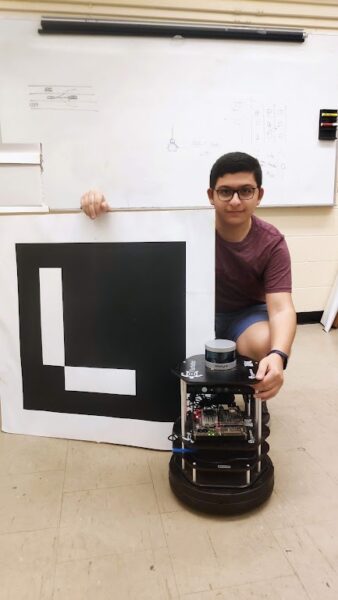

Mohammadreza Kazemi

Program: LURA

Supervisor: Hossein Kassiri

Abstract: Software Development for a Wearable Brain EEG Monitoring Device

Electroencephalography (EEG) is known as the best non-invasive method for high-resolution real-time monitoring of brain neural activities. The standard way of conducting EEG recording, however, requires a trained technician to conduct the experiment, which involves patient preparation, electrode placement, equipment setup, data collection, and interpretation. Motivated by this, several wireless wearable EEG recording headsets have been developed over the past few years, aiming to provide a fast, low-cost, and medically relevant alternative to the existing technology, thus achieving long-term ambulatory EEG recording. Successful development of such technology has a significant positive impact on many diagnostic, treatment, rehabilitation, and communication applications. We have created a wearable wireless device in the Integrated Circuits and Systems Lab that can incorporate a large number of recording channels and is intended to be used as a low-cost long-term brain monitoring solution. A patented algorithm in the device enables the early detection of epileptic seizures. The major goal of this project is to design, create, and test Windows-based software (or an Android app) that communicates with this wearable technology. Software or an app is needed to gather, store, and display the data received from the wearable device.

Nina Yanin

Program: USRA

Supervisor: Manos Papagelis

Profile: Nina is a 5th-year software engineering student. Over the previous summer, she worked on a risk-based trip recommender model as part of the NSERC USRA 2021 program under Dr. Papagelis, work that will be continued this summer.

Abstract: Optimal Risk-aware Point-of-Interest (POI) Recommendations during Epidemics

Human mobility plays an essential part in the advancement of a pandemic. As such, travel restrictions and quarantines were used to mitigate it, despite causing social and economic turmoil. Previous studies evaluated recommendation models as tools to replace lock-downs, support decision-making and mitigate infections by recommending the least risky trips and points of interest (POIs). This research aims to expand on these models by using mobility-based risk-aware recommendations to minimize the global risk of infection at POIs. The problem is formulated as a many-to-many assignment linear integer programming problem, where many users can be directed to many POIs. The model receives queries over a short span of time, which consist of sources, search radii and times of departure, and returns sorted top-k POIs for each user, such that the aggregated risk at all POIs is minimized. More specifically, it uses a static risk approximator based on spatiotemporal mobility data to detect expected occupancies at POIs over time. Once the top-k sorted POIs are selected, they are treated with exponentially decaying likelihoods of being chosen by the user. The model takes the likelihoods and treats them as dynamic risks that are added to the static risks of their respective POIs for a median dwell-time period. The model aims to find the combination that minimizes these added risks. An extensive evaluation was performed using synthetic queries over an hour in the Toronto region, where the top-k sorted POIs were evaluated against k random selections. Preliminary results demonstrate that the model outperformed sensible baselines. Overall, this data-driven model can be a vital tool in mitigating the risk of infection and encouraging responsible behaviours in the community.
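The interplay of static and dynamic risk described above can be sketched in Python. This is a simplified greedy illustration, not the integer programming formulation used in the research: the function names, the `decay` parameter, and the risk values are all hypothetical, and dwell-time expiry of the dynamic risk is omitted.

```python
import math

def recommend(risk, k, decay=1.0):
    """Return the k lowest-risk POIs with exponentially decaying choice likelihoods."""
    ranked = sorted(risk, key=risk.get)[:k]
    weights = [math.exp(-decay * rank) for rank in range(len(ranked))]
    total = sum(weights)
    return [(poi, w / total) for poi, w in zip(ranked, weights)]

def serve_queries(static_risk, n_users, k, decay=1.0):
    """Serve users one at a time, adding each likelihood to its POI as dynamic risk."""
    risk = dict(static_risk)  # copy so the static estimates stay untouched
    recommendations = []
    for _ in range(n_users):
        recs = recommend(risk, k, decay)
        for poi, likelihood in recs:
            risk[poi] += likelihood  # expected extra occupancy from this user
        recommendations.append([poi for poi, _ in recs])
    return recommendations, risk

# Hypothetical static risk estimates for four POIs.
static_risk = {"A": 0.2, "B": 0.5, "C": 0.1, "D": 0.9}
first = recommend(static_risk, k=2)
recs, final_risk = serve_queries(static_risk, n_users=3, k=2)
```

Because each served user raises the risk of the POIs they are likely to visit, later users are steered toward other POIs, which is the intuition behind minimizing the aggregated risk.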

Roozbeh Alishahian, Sofia Graci, and Youssef Demashkieh

Program: LURA, LURA, and RAY

Supervisor: Aleksander Czekanski

Profile: Sofia is entering her fourth year of Mechanical Engineering at Lassonde and she is interested in studying the effects of different hydrogel compositions on characteristics of interest such as final print edge quality.

Abstract: Development of Tools for Bioprinting Fidelity Assessment and 3D Printing Bioinks with Internal Structures

Bioinks (biocompatible hydrogels) are used in 3D bioprinting as the base material to produce living tissue. They require certain properties to be suitable for such applications. Biocompatibility, printability, repeatability, ability to support cells and consistency across the print are some of the main criteria bioinks must have to be useful for the fabrication of tissue. Using a mixture of Gelatin (type A), Sodium Alginate and Cellulose Nanofibres (CNF), we synthesized various solutions for bioprinting and then tested the properties of the structures created to gain insights into finding the optimal mixture for bio structure printing.

In this project, we created a series of tools and techniques to assess and quantify the main properties of the printed structures. The mixtures underwent Fourier-transform infrared (FTIR) analysis to determine the chemical makeup of each mixture, alongside a particle injection and distribution study to examine the possible live cell distribution across the print. Simple linear prints and square structures were photographed under a microscope and then studied using continuous edge detection and other image processing to assess the fidelity of the print. The final complex structures were studied using a Hybrid Rheometer and X-ray Microscopes to determine the mechanical properties and the fidelity of the internal structure. The optimal mixture and the assessment tools created during this research provide other researchers with access to a reliable mixture for specified applications and general tools to quantify the properties of future mixtures for other types of bioprinting.

UN SDG 4: Quality Education

Audrey Garcia

Program: LURA

Supervisor: Andrew Maxwell

Profile: Audrey is a 4th year student majoring in Economics under the Faculty of Liberal Arts and Professional Studies. Audrey is interested in entrepreneurship, startup culture, design thinking, and User Experience Design.

Abstract: Improving UNHack Design Thinking Process

UNHack is a hackathon program, an experiential learning activity, that runs for 2-3 days annually, typically over a weekend. Students leverage the design thinking mindset through a series of design sprint stages to find solutions to wicked problems that are framed in the form of "How Might We" prompts. Each wicked problem relates to one or more United Nations Sustainable Development Goals. During the COVID-19 pandemic, UNHack operated in an online setting where students used digital tools such as Discord to communicate with mentors, staff, and team members, and staff-designed Miro templates to assist them in the design thinking process. As academic institutions transition to the post-pandemic period, UNHack is expected to operate in either a hybrid or a fully in-person setting. UNHack goes through multiple stages: (1) Opportunity Identification / Discovery Research, (2) Problem Definition, (3) Ideation, (4) Design / Solution Development, and (5) Business & Market Validation.

Alexandro Salvatore Di Nunzio, Damith Tennakoon

Program: Research Assistants

Supervisor: Mojgan Jadidi

Profiles: Alexandro is a research assistant working on the Virtual Sandbox Development Project under the supervision of Dr. Jadidi. He recently graduated from the Lassonde School of Engineering with a Bachelor of Arts in Digital Media, with a Specialized Honours in Game Arts. He also has a Bachelor of Science in Psychology, which has proven to be pertinent when it comes to the development of systems concerning UI/UX Design and Human-Computer Interaction.

Damith is an undergraduate research assistant for the XR Sandbox Development project at GeoVA Lab. He has a passion to devise, develop and apply high-tech in engineering education. In a world that is constantly evolving, he believes that through the application of physics and engineering, we can steer the spear of innovation towards sustainability and technological advancements.

Abstract: The Augmented and Virtual Reality Sandbox - Teaching Complex Systems through Immersive Environments

In the past, complex Earth systems concepts have always been delivered through pencil and paper. Students are expected to comprehend the 3-dimensional world by reading 2D paper/digital topographic maps, making it difficult to grasp Earth systems concepts. To overcome this, we have adopted the Augmented Reality (AR) Sandbox, initially developed by UC Davis, that uses a depth sensor to create a 2-dimensional topographic map of its sandy terrain, which is then projected, in real-time, onto the sand. This system is well designed to visualize 3D terrain surfaces; however, it cannot display or compute geological, hydrological and man-made structure concepts. To address these obstacles, we have been developing a virtual application of the AR Sandbox, called the Virtual Sandbox. The Virtual Sandbox is a web-based computer application, developed using the Unity Game Engine, that allows users to perform complex surface terrain model analysis. Users are provided with tools that enable them to measure the horizontal distance between two points, visualize a planar structure with the 3-point problem approach, and generate rivers using a fluid simulation. Further, our team has also begun the development of a fully immersive virtual reality application, called the Virtual Reality (VR) Sandbox, using the Meta Quest 2 VR headset. This application enables students to take virtual tours of well-known locations on Earth such as the Grand Canyon and the Swiss National Park. It includes tools such as a river generation tool to visualize how rivers once flowed on varying terrains. We are extending this technology to further engineering topics such as circuit board prototyping, drone assembly, and satellite design, aimed at providing immersive lab experiences for Lassonde students.
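The 3-point problem mentioned above has a compact geometric solution: three non-collinear points define a plane, and the plane's normal vector gives its dip angle and dip direction. The following Python sketch illustrates the calculation; it is not code from the Virtual Sandbox (which is built in Unity), and the function name and the (east, north, up) coordinate convention are assumptions.

```python
import math

def dip_from_three_points(p1, p2, p3):
    """Return (dip_angle_deg, dip_direction_deg) for the plane through three points.

    Points are (east, north, up) tuples. Dip direction is an azimuth measured
    clockwise from north; it is not meaningful for a horizontal plane.
    """
    v1 = [b - a for a, b in zip(p1, p2)]
    v2 = [b - a for a, b in zip(p1, p3)]
    # Plane normal from the cross product v1 x v2.
    nx = v1[1] * v2[2] - v1[2] * v2[1]
    ny = v1[2] * v2[0] - v1[0] * v2[2]
    nz = v1[0] * v2[1] - v1[1] * v2[0]
    if nx == 0 and ny == 0 and nz == 0:
        raise ValueError("points are collinear and do not define a plane")
    if nz < 0:  # use the upward-pointing normal
        nx, ny, nz = -nx, -ny, -nz
    # The upward normal tilts toward the downdip direction.
    dip_angle = math.degrees(math.atan2(math.hypot(nx, ny), nz))
    dip_direction = math.degrees(math.atan2(nx, ny)) % 360.0
    return dip_angle, dip_direction

# A plane that drops 1 unit for every 1 unit travelled east.
dip, direction = dip_from_three_points((0, 0, 0), (0, 1, 0), (1, 0, -1))
```

For the example points, the plane dips 45 degrees toward the east (azimuth 90 degrees); the strike is perpendicular to the dip direction.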

Sahel Ghazanfari

Program: Research Assistant

Supervisor: Andrew Maxwell

Abstract: Creating the Global Classroom: Enhancing the Commercialization of University Research

This project designs a Master's course in which students come up with their own projects to adopt new technologies and implement them in research files at the university. It is developed to offer a formal approach and novel frameworks that allow students to comprehend the complications around improving the value of their technologies, overcoming barriers to adoption, and recognizing alternate strategies for market success.

Suha Siddiqui

Program: USRA

Supervisor: Maleknaz Nayebi

Abstract: Expertise Aware Content Customization

Readers’ expertise directly impacts their understanding of content, yet everyone receives the same manual for products. In software systems, APIs are the interfaces that offer services to other systems and can connect software systems together. With the adoption of machine learning in recent years across different industries, many machine learning libraries are written and used by practitioners from different sectors to bring intelligence into their domain of practice. Yet, the documentation for these libraries is written by software professionals and is highly technical. Thus, there is an abundance of tutorials, courses, and educational resources, and the Q/A platforms are full of redundant mistakes reported by the users. In this study, we focused on the popular ML library, TensorFlow, and aimed at answering the question “What model and tool can translate API documentation based on user expertise in Machine learning and software engineering?”. We first developed a method called “ELSU” to identify the expertise of the user and then curate the content to their expertise on multiple dimensions of readability and content. ELSU uses docstrings (descriptive string literals serving a similar purpose to descriptive comments) to obtain TensorFlow API descriptions. Then, a machine learning process is performed using the existing tutorials to train the model and predict the level of documentation, which is customized and strung together with external resources. Eventually, the mechanism can be applied to various facets of software not exclusive to TensorFlow. Furthermore, the developed project can expand to other contexts to help develop resilient infrastructure and educational access (UN Sustainable Development Goals 9.5 and 4.6, respectively). The project is available at http://tf-doc.suhasiddiqui.net/.

Yixi Zhao

Program: LURA

Supervisor: Alvine Boaye Belle

Abstract: Assessing the Systematicity of Software Engineering Reviews Self-Identifying as Systematic

Systematic reviews allow researchers to synthesize the state of knowledge on a research question and to understand the associations among exposures and outcomes. Explicit, reproducible, and systematic methods are normally used to reduce the bias that may arise while conducting a review. When a systematic review is conducted properly, it yields reliable outcomes from which conclusions can be drawn and decisions informed. Systematic reviews have therefore become popular and crucial in evidence-based health care and in other areas, including psychology, education, and sociology. However, most systematic reviews exhibit low methodological and reporting quality. It is therefore important to follow reporting guidelines so that a review is transparent, complete, trustworthy, reproducible, and unbiased. Nevertheless, systematic reviews often do not abide by the existing guidelines, which likely lowers their quality and leads to negative effects, including a lack of methodological rigor and low-credibility findings that may mislead decision-makers. Our research explores a quantitative measure, for the computer engineering area, that lets users evaluate how systematic a review is and fosters objective and consistent comparison among reviews. We evaluated 151 reviews published in the software engineering field, drawn from Google Scholar, IEEE and ACM, which showed relatively suboptimal systematicity, i.e., methodological rigor and reporting quality.

UN SDG 6: Clean Water & Sanitation

Zehra Fatima

Program: Mitacs Globalink

Supervisor: Rashid Bashir

Profile: Zehra is a third-year undergraduate student of Civil Engineering at Aligarh Muslim University, Aligarh. As a research intern under the Mitacs Globalink Research Internship (GRI) program, Zehra is excited to explore groundwater hydrology in the context of climate change.

Abstract: Modeling the Effect of Climate Change on Groundwater Recharge

Groundwater is an important ecological resource. It is relied upon by many as a source of fresh water for drinking, irrigation and industrial purposes. About 30% of Canadians rely on groundwater for domestic use. Groundwater recharge is the flow of water through the vadose zone into the underlying aquifer. Recharge rates vary from location to location as recharge depends on the soil type as well as the frequency, duration, and intensity of precipitation. The climate is constantly changing and therefore it is crucial to estimate how groundwater recharge rates will be altered in response to climate change.

This research effort aims to identify the climatic factors that affect groundwater recharge rates and to quantify groundwater recharge rates for the future. To accomplish this, historical and future climate data for various locations across Ontario were compiled over a total of 120 years, using the available climate data records as well as four different General Circulation Models (GCMs). Groundwater recharge was simulated using a variably saturated flow model with a soil-atmosphere boundary condition. The simulations were carried out using HYDRUS-1D. The output data was then processed to obtain the water balance at the ground surface and the amount of recharge. The results of this research suggest that the impact of climate change on groundwater recharge will be location-specific and is a function of the hydraulic properties of the vadose zone.

Ghuncha Fatma

Program: Mitacs Globalink

Supervisor: Cuiying Jian

Profile: Ghuncha is a Mechanical Engineering undergraduate student whose research interests involve Machine Learning and Materials Science. She aims to use her learning to develop novel solutions to crucial problems that can have a global impact.

Abstract: Machine Learning Guided Filter Design to Alleviate Heavy Metal Pollution

According to a recent UN study, 80% of wastewater flows back into the ecosystem without being treated. Although acceptable in low quantities, chemical contaminants such as heavy metals present in wastewater may find their way into the food chain and cause chronic or carcinogenic diseases. Thus, it is crucial to treat wastewater before releasing it into the environment. To solve this, we propose the use of graphene-based filters. As opposed to conventional membrane filters, which suffer flux decline over time and have a low tolerance to acid/alkaline conditions, graphene-based filters have demonstrated tremendous robustness and high fluxes in wastewater remediation. The aim is to accelerate the material design of graphene-based filters through a combined approach of machine learning (ML) with classical molecular dynamics (MD). A state-of-the-art ML algorithm, GDyNet (based on graph neural networks), is deployed to automate data mining and detect patterns that may otherwise go unnoticed by human eyes because of the vast amount of data produced by MD simulations. This is achieved by converting the trajectory data of metal ions in water to a graph with nodes and edges and updating it as the system evolves. This graph is then fed to the GDyNet model to learn motion and predict the probability of different trajectory patterns of metal ions in the MD simulations. We can thus identify the evolution of ions across filter pores during the filtration process. This sets a guideline for the optimal design of graphene-based filters by modifying material structures, focusing primarily on functional groups attached to the filter pores. Upon application, we anticipate filters delivering high performance with low energy inputs and considerably reduced maintenance costs.

John Li Chen Hok

Program: LURA

Supervisor: Stephanie Gora

Abstract: Lead in Decentralised Drinking Water System

Lead is a highly toxic heavy metal that can cause adverse neurological effects in children and renal dysfunction in adults. Decentralised drinking water systems (DDWS) include any systems that are not supplied by a centralised municipal water distribution network. Lead has a maximum acceptable concentration (MAC) of 5 ppb (µg/L) as defined by Health Canada, but should be kept as low as reasonably achievable (ALARA) as there are no safe exposure levels. Many sampling methods that consider variables such as overnight stagnation or water usage patterns have been developed to assess lead exposure levels, but there is no specific recommended method in Canada for DDWS. Unlike in municipal systems, in DDWS, lead usually originates from premise plumbing such as fittings, solder and faucets, and its release is influenced by many factors such as the water chemistry. Our research team partnered with the Nova Scotia Department of Natural Resources and Renewables to conduct a study on two potentially problematic provincial park campgrounds in Nova Scotia using water quality data from previous years and new data collected in the field by the research team. The objective was to identify the source of lead and find potential solutions. Water samples were collected using methods such as the 6-hour stagnation (6HS). The results of water analysis showed that the men’s bathroom at park 1 was the most problematic, with high levels of copper, zinc and lead, suggesting corrosion of brass plumbing components. Additionally, the sampling methods were compared to each other to find out which ones were redundant. Making sure that water in DDWS is safe for consumption is essential to protect public health and aligns with the UN's Clean Water and Sanitation goal.

Reetika Ali

Program: Research Assistant

Supervisor: Marina Freire-Gormaly

Abstract: Rapid Groundwater Potential Mapping of the Ban Saka area of Laos using GIS and RS tools and Analytic hierarchy process (AHP) techniques

Over 40% of the global population does not have access to sufficient clean water. By 2025, 1.8 billion people will be living in countries or regions with absolute water scarcity, according to UN-Water. Laos is a nation with plentiful surface water and broad rivers, but there is little infrastructure to make that water clean and accessible outside of cities. Daily use and emergency responses in humanitarian contexts require a rapid setup of water supply. Boreholes are often drilled where the needs are highest and not where hydrogeological conditions are most favorable. The Rapid Groundwater Potential Mapping (RGWPM) methodology was therefore developed as a practical tool to support borehole siting when time is critical, allowing strategic planning of geophysical campaigns. RGWPM is based on the combined analysis of satellite images, digital elevation models, rainfall, and geological maps, obtained through spatial overlay of some hydrogeological variables controlling groundwater potential: Drainage Density, Rainfall, Slope, Soil type, Geology, Lineament density, Land use and land cover (LULC). Drainage Density controls the runoff distribution and infiltration rates, Rainfall is the major source of water, LULC affects the recharge processes, Slope drives the water flow energy, Soil type governs the infiltration rates, Geology controls infiltration, movement, and storage of water and Lineament density determines the hydraulic conductivity. In this work, RGWPM maps were produced performing a study using Multiple Criteria Decision-Making methods. Analytic hierarchy process (AHP) techniques were used for delineation of Groundwater potential zoning of the Ban Saka area in the Phonghong district of Laos using GIS and Remote Sensing techniques through a weighted overlay of these seven factors with the overall groundwater potential (GWP) characterized as ‘very low’, ‘low’, ‘moderate’, and ‘high’, with each zone associated to a specific water supply option. 
As groundwater is the most reliable source of drinking water, in Laos RGWPM is used to provide a safe source of potable water.
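The AHP weighted overlay described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the study's workflow: it uses the geometric-mean approximation for AHP weights, three factors instead of the seven listed, and a hypothetical pairwise comparison matrix, rating scale, and class breaks.

```python
import math

def ahp_weights(pairwise):
    """Approximate AHP priority weights with the row geometric-mean method."""
    n = len(pairwise)
    geo_means = [math.prod(row) ** (1.0 / n) for row in pairwise]
    total = sum(geo_means)
    return [g / total for g in geo_means]

def weighted_overlay(ratings, weights):
    """Combine per-factor ratings for one map cell into a single score."""
    return sum(r * w for r, w in zip(ratings, weights))

def classify(score, breaks=(1.5, 2.5, 3.5)):
    """Map a score to a groundwater potential class (breaks are hypothetical)."""
    labels = ("very low", "low", "moderate", "high")
    for label, upper in zip(labels, breaks):
        if score < upper:
            return label
    return labels[-1]

# Hypothetical pairwise comparisons for three factors, e.g. geology, rainfall, slope.
# Entry [i][j] states how much more important factor i is than factor j.
pairwise = [
    [1.0, 2.0, 6.0],
    [1 / 2, 1.0, 3.0],
    [1 / 6, 1 / 3, 1.0],
]
weights = ahp_weights(pairwise)

# Hypothetical ratings (1 = least favourable, 4 = most favourable) for one cell.
score = weighted_overlay([4, 3, 1], weights)
zone = classify(score)
```

In a GIS workflow the same weighted sum is applied to every raster cell; a full AHP analysis would also check the consistency ratio of the comparison matrix before the weights are used.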

Shafin Mahmud

Program: LURA

Supervisor: Magdalena Krol

Abstract: Visualizing Subsurface Contaminant Transport with Web-based Course Tools

According to the United Nations, groundwater provides drinking water to at least half of the world's population and accounts for 43 percent of all irrigation water. Groundwater contamination occurs when human activity results in harmful substances entering the subsurface through spills or improper management, making groundwater unsafe for use. The recent increase in groundwater contamination and its serious health effects have caused a dramatic increase in studies exploring the principles of contaminant transport, which are taught at various universities and colleges. This research focuses on developing web-based course tools using languages such as HTML, CSS, and JavaScript to provide graphical representations of many mechanisms of subsurface contaminant transport. Debugging and testing are also necessary for quality assurance purposes. The tool will also allow the user to study the graphs under varying conditions. The main objective of this research is to develop a framework that can be used to teach several fundamental environmental engineering courses and to conduct additional research on contaminant transport by analyzing the graphs to design effective mitigation techniques.

Kyle Baird

Program: LURA

Supervisor: Stephanie Gora

Abstract: 3D Printed Proportional Water Sampling Device, to Help Detect Chemical Contaminants in Decentralized Drinking Water Systems

Each year there are countless health issues associated with contaminated drinking water. To combat these issues, research into a non-invasive 3D printed water sampling device began. Many types of water sampling can be done; proportional sampling was chosen for this research because of a distinct advantage: researchers can see how much of a chemical contaminant consumers are exposed to over a set time frame. A 3D printed sampling device would make proportional sampling easier. Because the device is built in a modular way, it can be attached to any drinking water system to easily provide quality water samples. The modular design also allows specific components to be easily replaced if needed, making the device user-friendly and, thanks to the versatility of the overall design, suitable for a wide variety of applications.

UN SDG 7: Affordable & Clean Energy

Akshay Karthiyayini Kelappan, Niloy Sen and Sheel Bhadra

Program: Mitacs Globalink

Supervisor: Paul G. O'Brien

Abstract: Design and Demonstration of a Photovoltaic-thermal Solar Water Heating System

High temperatures have a detrimental effect on the performance of photovoltaic (PV) cells because of their negative temperature coefficients. The performance of PV cells can be enhanced by keeping PV cells cool while they are exposed to sunlight. This study proposes a novel PV-water-Trombe wall, wherein water is used as a thermal energy storage medium. Hot water and air at the top of the Trombe wall can be used for indoor air and water heating in buildings. Further, PV cells are placed at the bottom half of the Trombe wall, where the relatively cold water at the bottom of the thermal energy storage medium keeps them cool. Moreover, tinted acrylic sheets can be placed in the water wall to increase the amount of solar thermal energy generated and stored in the water. Using tinted sheets also allows for the Trombe wall to be semi-transparent, which can be desirable for improved building aesthetics. In this work, a PV-water-Trombe wall prototype (with and without an acrylic tinted sheet) was tested under solar-simulated light for its ability to generate both electricity and heat simultaneously. The temperature profile of the experimental setup was tracked using thermocouples placed at various places, and the output power from the PV cells was measured. CFD simulations will also be performed and the numerical results will be compared with the experimental results. The results demonstrate that the Trombe wall prototype can provide air up to 70 °C and water above 40 °C while keeping the temperature of the PV cell at lower values. The experimental results attained thus far suggest that the PV-water-Trombe walls can improve building energy performance by generating electric power and by reducing building heating loads.
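The effect of a negative temperature coefficient can be illustrated with the commonly used linear power model, P = P_ref · (1 + γ · (T_cell − T_ref)). The Python sketch below is illustrative only: the coefficient of −0.4% per °C is an assumed typical value for crystalline silicon, and the temperatures and rated power are hypothetical rather than measurements from this study.

```python
def pv_power(p_ref_w, cell_temp_c, gamma_per_c=-0.004, t_ref_c=25.0):
    """Estimate PV output power with a linear temperature coefficient.

    p_ref_w: power at the reference temperature (W)
    gamma_per_c: fractional power change per degree C (assumed value)
    """
    return p_ref_w * (1.0 + gamma_per_c * (cell_temp_c - t_ref_c))

# Hypothetical comparison of a hot cell and a water-cooled cell.
hot = pv_power(100.0, 65.0)
cooled = pv_power(100.0, 40.0)
gain_w = cooled - hot
```

Under these assumed numbers, cooling the cell from 65 °C to 40 °C recovers about 10 W per 100 W of rated power, which is why placing the cells next to the colder water is attractive.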

Jack McArdle

Program: Mitacs Globalink

Supervisor: Ahmed Elkholy and Roger Kempers

Profile: Jack is a final year aerospace engineering student at Durham University (UK) currently undertaking the Mitacs Globalink Programme. Jack is passionate about working on projects related to heat transfer.

Abstract: Spherical Re-entrant Cavities for Pool Boiling Enhancement using SLM

Pool boiling cooling systems have been widely applied in several industries, such as immersion cooling in electronics and aerospace, owing to their high heat transfer coefficient (HTC) and increased critical heat flux (CHF). The current work proposes using the laser powder bed fusion (LPBF) technique to fabricate spherical re-entrant cavities that are not amenable to fabrication using conventional machining. Re-entrant cavities are spherical cavities embedded within the sample with an opening (pore) to the boiling surface. This opening has a diameter smaller than the cavity diameter, which helps trap the vapour and promotes nucleation activity. Using Novec 7100/ethanol at saturated conditions, the CHF and HTC were tested using a world-leading pool boiling apparatus. It is expected that the porous nature of LPBF-fabricated parts will facilitate bubble generation through the pores and wick the replenishment liquid to the hot spots through the internal arteries, which would increase the CHF and HTC simultaneously. The bubble dynamics will be investigated using a high-speed camera to understand the underlying heat transfer enhancement mechanism.

Mahmoud Al Akchar

Program: LURA

Supervisor: Roger Kempers

Profile: Mahmoud is interested in thermal applications and cooling. His capstone project was on exhaust heat recycling of trucks to improve fuel efficiency under the supervision of Dr. Kempers. He decided to continue his research with Dr. Kempers as he plans to pursue a Master’s degree in the field.

Abstract: Single-Phase Grip Metal Cold Plate Technology for IGBT Modules Cooling Applications

This work evaluates a Grip Metal cold plate, a new type of cold plate based on hook technology, against other more common cold plate technologies for IGBT cooling. Insulated Gate Bipolar Transistor (IGBT) modules are used in electric power converters and inverters. One major mode of failure is thermal stress. High temperature causes packaging and cable failure. Active and passive heating and cooling of the IGBT junctions cause temperature swings and thermal cycling. The resulting long-term degradation causes sudden breakdowns and downtime that require unscheduled maintenance. Therefore, advancement in IGBT cooling is necessary to save cost and time and to push the hardware to its maximum efficiency. A skiving process introduced by NUCAP produces an array of hook-shaped features that increase surface area. This new technology is intended to improve the cooling performance of cold plates by facilitating fluid mixing and promoting boundary layer separation. A single-phase testing apparatus is built to evaluate the cold plates under variable flow rates. The heat resistance of different cold plates will be calculated and compared. It is expected that the Grip Metal cold plate will have a lower heat resistance than the others and therefore a better cooling performance.
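As a rough illustration of how the heat resistance of different cold plates might be compared, the sketch below assumes the common definition R = (T_base - T_inlet) / Q, where T_base is the cold plate base temperature, T_inlet is the coolant inlet temperature, and Q is the applied heat load. The abstract does not state which definition is used, and the function name and sample readings are hypothetical.

```python
def thermal_resistance(t_base_c: float, t_inlet_c: float, heat_w: float) -> float:
    """Return thermal resistance in K/W.

    Assumed definition (not taken from the abstract):
    R = (T_base - T_inlet) / Q
    """
    if heat_w <= 0:
        raise ValueError("heat load must be positive")
    return (t_base_c - t_inlet_c) / heat_w


# Hypothetical readings for two plates at the same flow rate and heat load:
# (base temperature in deg C, inlet temperature in deg C, heat load in W)
readings = {
    "plate_a": (60.0, 20.0, 200.0),
    "plate_b": (70.0, 20.0, 200.0),
}

for name, (t_base, t_inlet, q) in readings.items():
    print(f"{name}: {thermal_resistance(t_base, t_inlet, q):.3f} K/W")
```

Under this definition, the plate with the lower value removes the same heat load at a smaller temperature rise.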

Maria Beshara

Program: USRA

Supervisor: John Lam

Profile: Maria is currently working towards her degree in electrical engineering and is working on GaN based bidirectional power interface. Her research interests include power optimization, renewable energy systems, and environmental bio-energy harvesting.

Abstract: Gallium-Nitride Based Multi-MHz Bidirectional Power Interface for Integrated Energy Storage in a DC Microgrid

The Canadian data centre and wireless communication market is currently worth more than $5 billion and is forecasted to grow by over 10% every year. Using renewable energy sources to power data centres and servers can provide a clean solution to manage the overall electricity consumption. Since most of the electrical components in a data centre, such as batteries for providing back-up power, require DC power, high-voltage DC distribution is an emerging power architecture. The existing power interface that converts the surplus energy captured from the renewable source to the storage element (e.g. a battery) consists of two conversion stages: one stage interfaces with the renewable source and the second stage interfaces with the storage element. This approach results in low efficiency and high system cost. This project aims to develop a single-stage bi-directional AC/DC converter that stores surplus extracted power or delivers required power directly, unlike the conventional design approach. The proposed power interface operates in two different modes: step-down (called buck mode) to store energy and step-up (called boost mode) to deliver energy from the battery. To reduce the size of the proposed circuit, Gallium Nitride switching devices with very small footprints are used. To minimize the size of the magnetic components, the proposed circuit operates at a very high switching frequency in the MHz range. Simulation and theoretical analysis of the proposed circuit with a rated power of 300 W are performed in Powersim and MATLAB to study the converter’s operating principles and power losses. It is anticipated that the power conversion efficiency will increase by at least 5 to 10% compared to the existing design.

Walid Orabi

Program: LURA

Supervisor: Roger Kempers

Profile: Walid is pursuing a mechanical engineering degree at Lassonde. His research revolves around the topology optimization of heat sinks. He will be applying this technology to the heat transfer fluid flow field.

Abstract: Topology Optimization of Liquid Cooled Heatsinks

Topology optimization has been gaining popularity for creating non-intuitive heat sink designs that are difficult to obtain with traditional optimization methods. Three-dimensional topology optimization is computationally expensive; therefore, various techniques have been used to simplify it into a two-dimensional problem. In this work, topology optimization of a 2D water-cooled heat sink is carried out using the density-based approach. The energy and flow fields are solved using COMSOL Multiphysics. The optimization objective is to increase the mean temperature of the fluid leaving the heat sink at a given heat flux and pressure drop. The Globally Convergent Method of Moving Asymptotes (GCMMA) built into COMSOL was employed to reach the optimization solution. COMSOL’s built-in adjoint solver and sensitivity analysis are used to guide the optimization. It is found that the prescribed tuning parameters significantly influence the obtained design, which requires further investigation. Several other parameters, such as the heat flux, pressure drop, and design domain size, will also be investigated.

Jaiden Fairclough

Program: RAY

Supervisor: Kamelia Atefi Monfared

Profile: Jaiden is a 2nd-year Civil Engineering student. As a RAY researcher, he is exploring his interests in geothermal energy by working with Prof. Monfared.

Abstract: Geothermal Energy Piles

Geothermal energy piles are dual-purpose structural elements: in addition to their load-bearing role as the foundation, they can be used to provide clean heating or cooling using sustainable geothermal energy. During construction of energy pile foundations, pipes normally made of high-density polyethylene are added to the interior of the foundation. These pipes carry a working liquid that acts as a heat exchanger, heating up or cooling down the pile and thus itself cooling down or warming up, respectively. Through this system, heating or cooling can be provided from green renewable energy. There are still knowledge gaps regarding the optimum thermo-mechanical performance of energy piles for different environments and ground conditions. This project evaluates the use of energy piles in the Canadian environment. In this project we develop a numerical model using COMSOL Multiphysics to replicate concrete cylinders of various dimensions, soil types, and configurations of the tubing system inside the pile. One key challenge found is the efficiency of this system. The findings will help determine the optimum energy pile configuration for the Canadian environment.

Rehan Rashid

Program: LURA

Supervisor: Roger Kempers

Profile: Rehan is a 4th-year Mechanical Engineering student. His passion for research stemmed from his curiosity about the unknown, and this has pushed him to explore different fields of engineering.

Abstract: Experimental Study and Performance Characterization of High-Performing Microchannel Heat Exchangers for Hypersonic Flow

Microchannel technology can be incorporated into heat exchanger designs for improved thermal performance and to accommodate mass and volume reduction for space hardware applications. This study details the design, fabrication, and commissioning of an experimental test loop used to characterize the thermal and hydraulic performance of the microchannel heat exchangers used in electric vehicle (EV) battery management, electronics cooling, and waste heat recovery. The test loop was designed with a regulator, a 10-micron particle air filter, a flow rotameter, a variable alternating current (AC) transformer coupled with a heater, and the microchannel plate heat exchanger. Pressure drops were measured with differential pressure transducers on the cold side and hot side of the heat exchanger. Temperatures were logged at the ports of the heat exchanger and used to compute the logarithmic mean temperature difference (LMTD). These values, alongside the input power, were used to characterize the performance of the microchannel heat exchanger. The primary fluid used in this investigation was nitrogen (N2); however, initial test runs were conducted with compressed air. The microchannel heat exchanger used for our experiment is made from stainless steel and manufactured through diffusion bonding and chemical etching processes. This research study provides a better method to characterize microchannel heat exchangers and allows us to determine the heat exchanger efficiency, heat exchanger effectiveness, fin efficiency, and overall heat transfer rate.
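The logarithmic mean temperature difference mentioned above can be sketched as follows. This is a minimal example that assumes a counter-flow arrangement, which the abstract does not specify; the function name and the sample port temperatures are hypothetical.

```python
import math


def lmtd_counterflow(th_in: float, th_out: float, tc_in: float, tc_out: float) -> float:
    """Logarithmic mean temperature difference for a counter-flow exchanger.

    Assumption (not stated in the abstract): counter-flow, so the end
    temperature differences are
        dt1 = hot inlet  - cold outlet
        dt2 = hot outlet - cold inlet
    """
    dt1 = th_in - tc_out
    dt2 = th_out - tc_in
    if dt1 <= 0 or dt2 <= 0:
        raise ValueError("hot stream must stay warmer than cold stream at both ends")
    if math.isclose(dt1, dt2):
        # The general formula becomes 0/0 here; its limit is dt1 itself.
        return dt1
    return (dt1 - dt2) / math.log(dt1 / dt2)


# Hypothetical port temperatures in deg C
print(round(lmtd_counterflow(100.0, 60.0, 20.0, 50.0), 2))
```

With a measured heat transfer rate Q and the LMTD, an overall conductance UA = Q / LMTD can then be estimated for the exchanger.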

Bridget Price

Program: Mitacs Globalink

Supervisor: Satinder Kaur Brar

Profile: Bridget is a visiting researcher working in Dr. Brar's lab through the Fulbright Canada Mitacs Globalink Program. She is a fourth-year student at Oregon State University double majoring in chemical engineering and bioresource research. Her research interests are in microbiology and engineering, particularly in biofuel production.

Abstract: Fed-batch Cultivation as a Bioprocess Tool to Increase Lipid Accumulation and Carotenoid Production by Rhodosporidium toruloides

Microbial lipids are a promising alternative oil feedstock for the production of biofuels, food additives, pharmaceuticals, and chemicals. In batch cultures, the oleaginous yeast Rhodosporidium toruloides is capable of accumulating up to 70% of its dry cell weight as lipids. In addition to microbial lipid accumulation, R. toruloides has the capacity to produce valuable compounds like carotenoids. R. toruloides was cultivated under fed-batch conditions as a tool to increase lipid accumulation and lipid productivity. Fed-batch cultures were run for 168 h in a bench-scale bioreactor using synthetic media. With the glucose concentration restored to 10 g/L every 24 h, a total biomass of 14.15 g/L and a lipid content of 42.4% (w/w) were obtained. The highest biomass productivity was observed at 32 h, while the highest lipid productivity was observed at 56 h. Finally, the fatty acids present were stearic, palmitic, and oleic acids. This study shows that the fed-batch fermentation process is an excellent tool to improve lipid accumulation and carotenoid production using R. toruloides.

Himanshu Mishra

Program: Mitacs Globalink

Supervisor: Cuiying Jian

Profile: Himanshu is a third year undergraduate student from the Department of Mechanical Engineering at Indian Institute of Technology, Kanpur. Himanshu is working with supervisor Prof. Cuiying Jian on the application of Gryffin Algorithm and aims to be an expert in the field of Machine learning.

Abstract: Application of Gryffin in the Design of Solar Cells

Data-driven design strategies for autonomous experimentation have mostly concentrated on continuous process parameters, despite the urgent need for effective techniques for selecting categorical variables. For instance, to design hybrid organic-inorganic perovskites for light harvesting, exploring categorical variables, i.e., organic molecules, halide anions, and cations, is of great importance for determining the optimal compositions for the required bandgap. This research employs the Gryffin algorithm to search categorical variables and pinpoint candidate materials that can optimize the requested bandgap. The Gryffin algorithm augments Bayesian optimisation by using kernel density estimation directly on the categorical variables. In addition, it can rank the importance of the properties of each categorical variable by converting properties into descriptors. The Gryffin algorithm was first used to explore hybrid organic-inorganic perovskites in search of candidate materials with the lowest bandgap. The descriptor generation process was modified to take various properties into consideration, including electron affinity, mass, and electronegativity for inorganic cations and anions, and energy levels, dipole moment, and molecular weight for organic compounds. It was found that molecular weight has the most relevance for organic molecules, whereas electronegativity has the most relevance for cations and anions. Following this, the Gryffin algorithm was also employed to explore inorganic solids of diverse elements and proportions to aid the design of inorganic solar cells. Properties of each element, such as atomic number, mass, and group number, will be ranked in relation to bandgap. It is expected that key information on property importance can provide crucial knowledge and insights to guide the development of photovoltaic devices.

Hira Memon

Program: Mitacs Globalink

Supervisor: Amir Asif

Abstract: Cost Optimization Model of Electric Vehicles Parking Lots for Distribution System Operator

During the past few years, greenhouse gas emissions have increased exponentially. According to the International Energy Agency report, 32.3 giga metric tons of CO2 were emitted in 2016, with the transportation sector among the major contributors. Hence, the penetration of Electric Vehicles (EVs) is considered the prime action toward a sustainable energy environment. The growth and accessibility of charging infrastructure to meet the charging demands of EV owners are vital for the consistent adoption of EVs. A considerable need for EV charging comes from EVs parked in commercial spaces and workplaces. Additionally, energy demand will likely increase by 56% over the 30 years starting in 2010 due to economic development, according to the US Energy Information Administration (EIA) assessment. However, the grid was originally designed to bear lower loads. Thus, with the introduction of this extra load, the grid requires additional infrastructure and research to protect it from potential threats. The Vehicle-to-Grid solution is currently under research to solve this problem.

The proposed model offers a strategy for optimizing the distribution system operator's profit while considering the EV parking lot (EVPL) owner's yields and EV user satisfaction. Additionally, the model decides on the optimal combination of uni-directional (UD) and bi-directional (BD) chargers, considering all the financial limitations of the vehicle-to-grid (V2G) services and the incentive-participation modeling of EV users.

Rayyan Al-Kadri

Program: LURA

Supervisor: Roger Kempers

Profile: Rayyan is interested in finding and studying ways to improve current technologies in Mechanical Engineering and enjoys experimentation.

Abstract: Development of 3D-printed wick structures

Two-phase cooling devices (i.e. vapour chambers (VCs) and heat pipes (HPs)) have recently gained attention in the microelectronics industry due to their ability to meet increasingly high heat flux requirements compared to traditional single-phase heatsinks. The wick is an essential component of HPs and VCs; it drives the working liquid from the condenser side (cold side) back to the evaporator (hot side) through its capillaries. Therefore, the wick should be engineered to have higher permeability (K) and a smaller effective pore radius (reff). As a result, various techniques and manufacturing methods, such as etching, sintering, and machining, have been developed to balance the trade-off between both parameters. The current paper proposes using laser powder bed fusion (LPBF) technology to tailor complex porous wicking structures. Several strut-based unit cell structures (BCC, FCC, FL, etc.) were developed and investigated at different porosity levels. The hydraulic performance parameters of the wicks were tested using the rate-of-rise (m-t) method. It was found that the additively manufactured wicks showed a higher K/reff compared with conventional sintered wicks.

UN SDG 9: Innovation in 3D Printing & Smart Materials

Steeve-Johan Otoka-Eyota, Yuyu Ren

Program: LURA

Supervisor: Solomon Boakye-Yiadom

Abstract: Defect Detection During Metal 3D printing Using Supervised Machine Learning

Metal-based additive manufacturing (Metal AM), also known as Metal 3D printing, is the process of making 3D objects by building up multiple layers of fine metal powder. There are different types of Metal AM, but our research focuses on the Laser Powder Bed Fusion (LPBF) technique. The main advantage of using LPBF Metal AM is its ability to create flexible, lightweight structures with complex geometric shapes in a shorter time. However, the produced parts contain defects and discontinuities caused by many factors. The lack of performance data, improvement standards, and consensus on the fabricated part properties has impeded the application of Metal AM in industry. Moreover, the absence of the fundamental data required for developing sophisticated artificial intelligence and machine learning algorithms has prevented this goal from being realized. The first objective of this research is to monitor and track the melt pool morphological evolution, the behaviour of ejected spatter, and the un-melted and over-melted regions during the rapid processing of parts. This involves collecting multi-sensor data and identifying the best pre-processing techniques for accurate dimensionality reduction. The main goal is to develop advanced machine learning algorithms that can predict defect generation during Metal AM. To gather data, we recorded an experiment using a high-speed camera and developed two different applications in Java and Python, generating two sets of data. Using a multitude of image processing techniques, we generated data by studying the melt pool and the ejected spatter. The generated data are automatically stored in a file, ready for cleaning and for developing new algorithms to detect defects in metal 3D printing and ultimately improve printing quality.

Yassine Turki

Program: Mitacs Globalink

Supervisor: Reza Rizvi

Profile: Yassine is a Mitacs Globalink student from Tunisia, working in Lassonde under the supervision of professor Reza Rizvi. Yassine's specialty is computer system engineering.

Abstract: Performing Hardness Test Using Fully Automated Three-Dimensional Printer

Several methods and tests have been carried out to investigate the mechanical behaviour of polymers. These tests require gathering a huge amount of data with good precision. Accuracy, time, and money constraints differ from one test to another. These constraints can easily limit the progress of a project in any field. The hardness test, for example, is considered the most suitable test in terms of these constraints. Hardness is defined as the resistance of a material to penetration, and the majority of commercial hardness meters force a small penetrator (indenter) into the metal by means of an applied load. Experiments will be done on thin and small material, and the appropriate indenter needs to be built taking its shape into consideration. Since 3D printing and rapid prototyping have a growing impact on modern manufacturing practices, the objective of this project is to have a fully automated three-dimensional printer that can perform printing as well as hardness tests. The microcontroller used in this research work is an Arduino Mega, and it will be responsible for controlling 5 stepper motors: x-axis, y-axis, z-axis, indenter, and nozzle. Four of these motors have already been installed in the 3D printer; the fifth motor is a linear actuator that will move the indenter up and down.

Madison Bardoel

Program: LURA

Supervisor: Roger Kempers

Profile: Madison is a 5th-year mechanical engineering student and this is her second time participating in the Lassonde Undergraduate Summer Research Conference. She had an amazing experience last year at the conference and wanted to continue her heat transfer-related research again this summer. She chose research because she is enthusiastic about getting hands-on experience in her field, and further developing her engineering knowledge.

Abstract: Two-Phase Loop Thermosyphon with Porous Evaporators Fabricated by Laser Powder Bed Fusion

Single-phase cooling methods have become insufficient for the high heat fluxes generated by increasingly miniaturized electronic components. Two-phase loop thermosyphons provide an attractive solution for transferring heat at relatively small temperature differences between consecutive boiling and condensation stages. The two-phase loop thermosyphon in the present study consists of an additively manufactured (AM) side-heated evaporator and plate heat exchanger condenser connected by transparent nylon tubing. Input thermal power converts the working fluid to vapour in the evaporator. The vapour flows upward through the riser tube to the condenser where it is condensed by a cooling loop. The condensed fluid returns to the evaporator under gravity in the downcomer tube. There have been extensive previous studies to enhance thermosyphon performance by modifying the evaporator surface topology through techniques such as powder sintering, coating, milling, etc., but not AM methods such as Laser Powder Bed Fusion (LPBF). The LPBF process produces small-scale surface deformations that work as stable nucleation locations for boiling and assist liquid replenishment. In the present study, three evaporators with different porosities were manufactured by LPBF and were investigated for their effect on thermal resistance at varying fill ratios and input powers. Computer simulations are used to analyze the conductive and convective heat transfer mechanisms within the evaporator. It is anticipated that this modification will enhance the loop performance and mitigate temperature instabilities. Electronics, solar, air conditioning, and avionics systems and applications will benefit from these enhanced cooling technologies, which will improve their overall performance and safety.

Jatin Chhabra

Program: LURA

Supervisor: Cuiying Jian

Abstract: Fracture Point Detection and Prediction in Graphene through Machine Learning Algorithms

Among all 2-D materials, graphene has received enormous research attention in the last decade. It is the strongest material known to mankind. Because the material is relatively new, it is vital that we research its material properties further and harness its full potential, which ranges from wastewater treatment to materials for space exploration. The purpose of this research is to dive deep into the characteristic behaviour of graphene with a faster and more cost-efficient approach using machine learning (ML) algorithms. The primary objective is to detect fracture points in a sheet of graphene with defect ratios of 1% to 10%, stretched under different trajectories. The trajectories consist of a large dataset signifying the direction of the stretch and the time-dependent coordinates of all the atoms undergoing an applied strain rate of 0.001 m/s at 300 K, obtained from molecular dynamics simulations. This detection can potentially be achieved by an anomaly detection algorithm that focuses on detecting outliers in the given trajectory data at every time step. The goal is to identify the abnormality and pinpoint its earliest occurrence to detect the first fracture point. The secondary objective is to input the fracture point detection data into a neural network and predict the fracture points for new graphene samples with other defect ratios. To fulfil this goal, two different neural networks from research papers were consulted to validate our conclusions on Young’s modulus and fracture stress under the above-mentioned temperature and strain rate. The analyzed code and derived observations provided a deep understanding of the architecture of neural networks and helped to lay the groundwork for our neural network. The future of graphene is promising. This innovative approach of integrating ML to investigate materials can deepen our understanding and accelerate the materials design process.

Josue Montero

Program: LURA

Supervisor: Roger Kempers

Abstract: Characterization of Interface Materials

My research is to aid in a project involving the characterization of interface materials. The device will take the measurements of thermal resistance, effective thermal conductivity, and electrical resistance as a function of pressure and the thickness of the material. As electronic devices become more compact and the demand for their sophistication increases, it is more difficult to reduce their operating temperature, which results in a shorter lifespan of the devices. TIMs (Thermal interface materials) eliminate the void between interface areas of electronic components in contact to maximize heat transfer and dissipation. The precision of current TIM testers is comparatively less sophisticated, so there exists a need for a more sophisticated testing device that can accurately measure the quality of TIMs.

Vennesa Weedmark

Program: LURA

Supervisor: Isaac B. Smith

Profile: Vennesa is a 4th-year Computer Science student who is interested in computational modeling, machine learning, and Mars.

Abstract: Seasonal variability of the Southern Polar Layered Deposits of Mars during surface observations and numerical simulations

The southern polar layered deposits (SPLD) of Mars are a series of troughs, 3-4 km deep, consisting primarily of water ice and dust and formed from erosion and deposition of ice and dust. Seasonally, during southern spring, fast-moving surface winds known as katabatic winds are accelerated downwards into the trough and erode the surface material in the process. Near the bottom of the trough, the katabatic wind layer rapidly expands, a phenomenon known as a katabatic jump, and optically thick, low-altitude clouds are formed because of significant changes in the local pressure. These clouds snow the eroded material back onto the surface and show the movement of surface material throughout the polar region.

For the first part of the project, I analyzed ~5000 visual band images from the Thermal Emission Imaging System (THEMIS) to statistically determine the seasonal variability of surface clouds over several Martian seasons. THEMIS has a narrow field of view, can easily resolve clouds on a 2-3 km scale, and has coverage from early spring to late summer. My analysis matches previously published observations from Professor Isaac Smith, and I was also able to update our current database of trough clouds by including the most recent Martian season. I found that the katabatic clouds are most common during mid-to-late spring, when the katabatic winds are at their strongest, and quickly die out towards the end of the spring.

For the second part of the project, I converted a hydraulic jump MATLAB model to Python. Hydraulic jumps are a terrestrial phenomenon similar to the Martian katabatic jumps that are responsible for trough formation. The model was modified to work with a 3-dimensional rotating reference frame and with Martian conditions, allowing us to investigate near-surface polar aeolian processes that influence polar topographical change.

The resultant model will also contribute to the development of a wider computational mesoscale model of the Martian atmosphere, as this model can quickly test new physics compared to computationally heavy models.

Mark Vertlib

Program: LURA

Supervisor: Regina Lee

Profile: Mark completed his BCom at York University in 2021 and entered the Physics and Astronomy (Space Science) program the following Fall term. He is researching RSO light curve analysis and characterization at the Nanosatellite Research Laboratory. His interests include RPGs, Sci-Fi novels, and board games.

Abstract: Improving the Satellite Facet Modelling Process for More Accurate Simulated Light Curve Analysis

Identification of Resident Space Objects (RSOs) from the ground is a crucial step to properly forecasting conditions in Earth orbit. One method being explored is identifying an RSO from a plot of its brightness variation over time (referred to as its light curve), which is directly related to its geometry, surface properties, orbit, and attitude. It is currently not feasible to train an Artificial Intelligence to characterize or identify an RSO from the light curve alone, as there is a limited set of labeled training data, so the need has arisen for simulated light curves to fill the gap. A current bottleneck to this process is the time it takes to create each 3D model for simulation (referred to as a facet model) – the process is complicated and limited in scale, taking 2-5 hours of effort depending on the RSO. In this research we have developed a GUI-based application to improve the efficiency of the facet modeling process and expand its capabilities, bringing the modeling time of an RSO to an hour or less so that the labeled dataset can be created. With the added functionality and intuitive interface provided by the application, it is now possible to create many more accurate facet models for simulation, and the process can be worked on in parallel by several researchers with minimal time needed for training. Satellites like Intelsat 10-02, Jason-2, and Radarsat-2 have been modelled using this technique, producing a variety of facet models with their solar panels tilted at various angles to provide a diverse range of light curves for each one.

Matias Quirola

Program: LURA

Supervisor: Isaac B Smith

Profile: As a Physics & Astronomy student, Matias has always been passionate about the Universe, the stars, and specifically our neighbouring planets. His research focus is on mapping the layers of the north polar cap of Mars, using radar-sounding data from the Shallow Radar instrument.

Abstract: Interpreting Layers of the North Polar Ice Cap of Mars for a Climate Signal

The North Polar Region of Mars is characterized by spiral troughs in the morphology and stratigraphy of its surface. Planum Boreum consists in part of the North Polar Layered Deposits (NPLD), a combination of regions of sand, dust, and ice that record possible erosion and deposition, or trough migration, due to climatic processes, as well as the cyclical variations in orbit and rotation that have shaped this Martian region and its evolution, giving insight into the climatic history of the planet. With radar sounding data acquired by the Shallow Radar instrument (SHARAD), it is possible to detect the reflections between the layers of the Martian polar cap, a product of the dielectric properties of the NPLD. By studying these layers, it is possible to detect the trough migration path, which has been mapped with industry software using the SHARAD data to produce a 3D cross section of the different layers, in which trough migration, deposition, unconformities, and wind patterns are evident along the trace of the stratigraphic reflector. These reflectors are divided into their respective regions in the NPLD, and a horizon is created for each reflector that is analyzed with the radar mapping software. For this project, the reflector R15 has been chosen, since the trough migration path as well as several unconformities can be easily observed as part of this horizon. An unconformity corresponds to erosion of a layer on top of which more material was later deposited, forming a gap between two sections of the same initial layer. Because of this division, it is possible to observe a trough migration path in which the trough is seen initially as part of the layer that has eroded and then seen again after the unconformity.

By studying these paths, the objective is to understand the formation of these troughs and locate the unconformities, as well as to assess how climatic processes and wind patterns affect the NPLD through erosion or deposition. This analysis is performed for the first time with three-dimensional data, making it possible to compare the new findings with the available 2D data, resulting in new interpretations or confirming previous theories.

Aiden Weatherbee

Program: LURA

Supervisor: George Zhu

Profile: Aiden is a fourth-year engineering student. He joined Dr. Zhu’s summer research project because it aligns with his research interests in developing and working with AI, robotics, and space technology.

Abstract: Robotic Capture and Collision Avoidance in Simulated Space Environment

Space exploration relies heavily on the development of artificial intelligence (AI) for in-orbit robotic capabilities. Specifically, the capturing, manipulation and collision avoidance of spacecraft are key enablers for common tasks such as satellite maintenance, docking, or orbital debris removal. Until recently, most of the notable maintenance tasks have been performed by astronaut extravehicular activities, which are very risky by nature. There has been little to no orbital debris removal and an exponential increase in the number of spacecraft in orbit. This research project focuses on developing the fundamental aspects needed for these common tasks in space exploration with the use of machine learning, reinforcement learning, and related techniques. Through a series of experiments, we plan to replicate some of the challenges and conditions that AI may face in the typical space environment. This includes factors such as the limited power and computing capabilities that are available in satellites, or how the high reflectivity of spacecraft can affect 3D interpolation capabilities. The project has also focused on AI-enhanced collision avoidance and path planning that will be tested within these experiments. This will mainly be executed through the industry's leading software library, ROS (Robot Operating System), as it is highly compatible and can be easily applied in the space industry.

Yanchun Fu

Program: LURA

Supervisor: Regina Lee

Profile: Yanchun is a Space Engineering student conducting research on developing a payload for the upcoming RSOnar mission. He is currently developing methods to identify RSOs, and interpolate their attitude profiles from image sequences. He has a great interest in remote sensing and Space Situational Awareness (SSA).

Abstract: Demonstrating Feasibility of Integrated RSO Detection and Tracking Workflow With Star-Tracker Grade Sensors and Particle Swarm Optimization Simulator

The rapid increase in resident space objects (RSOs) has posed challenges to space safety. Various methods of detecting and tracking RSOs have met with limited success in creating an effective workflow for automatic RSO identification. In August 2022, the RSOnar team at York is launching a payload onboard a Stratos balloon to demonstrate the feasibility of using star trackers for RSO detection and tracking. This research supports the RSOnar mission by optimizing payload assembly and by calibrating the Space-Based Optical Image Simulator (SBOIS) to analyze light curves for attitude determination.

To optimize the RSOnar design for assembly, different orders of assembly and connector/bracket placements were tested to find the optimal arrangement without altering structural properties. In calibrating SBOIS, I used the light curves of NEOSSat extracted in my 2021 LURA project as inputs. Since we possess its real attitude profiles, using these light curves greatly simplifies the process of determining the accuracy of the simulation results.

After multiple fit tests, my colleagues and I finalized the frame design of RSOnar and optimized it for easy assembly during hardware debugging. Assembly instructions were also written to support rapid disassembly and reassembly. For SBOIS, possible improvements were identified and implemented within its particle swarm optimization. The simulator can now reproduce light curves and attitudes that closely match the real ones provided as input, demonstrating the feasibility of reconstructing attitude profiles. With the launch of RSOnar and the calibration of SBOIS, we are one step closer to creating an integrated automatic RSO detection and tracking workflow that could be used widely, improving space situational awareness.

Sabrina Porrovecchio

Program: LURA

Supervisor: Isaac B. Smith

Profile: Sabrina is a Space Engineering student entering her fourth year. Her goal is to work in research in the field of Earth and space sciences, and LURA/USRA has given her an opportunity to assist in research on mapping water ice depths in the Martian subsurface.

Abstract: Identifying Subsurface Ice in Phlegra Montes, Mars, Using the Mars Reconnaissance Orbiter’s Shallow Radar (SHARAD)

The Martian surface and subsurface are confirmed to have abundant water ice at the poles and mid-latitudes. Evidence from radar, thermal, and imagery data suggests the presence of shallow ground ice and the existence of debris-covered glaciers. This project aims to characterize ground ice and glaciers in Phlegra Montes, a chain of massifs in the mid-latitudes of Mars (30°–50° N) with many periglacial and glacial features. Importantly, the southern portion of Phlegra Montes has been suggested as a possible landing site for the first human exploration, because accessible water ice and solar energy (available at low latitudes) are significant local resource requirements. This study aims to support the mapping of water ice by identifying areas within Phlegra Montes that exhibit geomorphological features consistent with shallow ground ice and glaciers. It uses a combination of orbital radar and image data from the SHARAD (SHAllow RADar) and Context Camera (CTX) instruments on NASA’s Mars Reconnaissance Orbiter. Using two geospatial software platforms, JMARS and SeisWare, reflections are identified by comparison with clutter simulations and then mapped in SeisWare to show ice depths throughout areas of particular interest. These areas include the Northeastern Lobate Debris Apron and LDA 1694 (inside the mountain chain). Mapping of these regions shows a high probability of shallow ground ice at sites where flow features can be identified using CTX imagery in JMARS. The results of this investigation will contribute to a better understanding of the ice distribution at Phlegra Montes and may support the selection of a landing/colony site there. The project may also provide targets of interest for the International Mars Ice Mapper (I-MIM) Mission.
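
As a rough illustration of how a radar reflection can be turned into a depth, the sketch below converts a two-way travel time into a depth using the standard relation depth = c·t / (2·√ε). It is not taken from the project itself: the function name is hypothetical, and the relative permittivity of 3.15 (a value commonly assumed for nearly pure water ice) is an assumption that would need to match the material actually overlying the reflector.

```python
C_VACUUM = 299_792_458.0  # speed of light in vacuum, m/s


def delay_to_depth(two_way_delay_s, rel_permittivity=3.15):
    """Convert a two-way radar delay (seconds) into a reflector depth (metres).

    rel_permittivity is the assumed real relative permittivity of the
    material between the surface and the reflector (3.15 ~ pure water ice).
    """
    if rel_permittivity < 1.0:
        raise ValueError("relative permittivity cannot be below 1")
    # The wave slows down inside the material by a factor of sqrt(permittivity).
    velocity = C_VACUUM / rel_permittivity ** 0.5
    # Halve the path because the signal travels down and back up.
    return velocity * two_way_delay_s / 2.0


if __name__ == "__main__":
    # A reflector arriving one microsecond after the surface echo.
    print(f"{delay_to_depth(1e-6):.1f} m")
```

A higher assumed permittivity (for example, ice mixed with rock debris) gives a shallower depth for the same delay, which is why the choice of value matters when reporting ice depths.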

Chakka Vijaya Aswartha Narayana

Program: Mitacs Globalink

Supervisor: Ping Wang

Abstract: An Efficient Partial Model Training Strategy for Federated Learning with Resource-Constrained Edge Devices

Federated learning is a distributed training strategy that enables collaborative training across multiple edge devices without accessing the data stored on them. In this strategy, each edge device trains a local model on its own data, and the model parameters are then shared with a server that aggregates them to generate a global model. This process is repeated until a threshold performance is achieved. The strategy has helped many deep-learning-powered apps and products, such as Google Gboard, achieve enhanced personalized results. Despite these benefits, the performance of deep learning algorithms suffers in this setting because devices have heterogeneous resource constraints. In a real scenario, devices may differ in memory and communication bandwidth, which degrades overall performance if it is not addressed appropriately during the aggregation of local models. This research focuses on an efficient strategy that generates a personalized subnetwork for each edge device in accordance with its resource constraints. The proposed strategy is inspired by the existing Dropout and DropConnect methods, which are often used to address overfitting in neural networks. A comparison of the proposed method with existing strategies on metrics such as convergence time, performance, and memory usage will show the benefits of this strategy over existing methods.
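
The basic loop described in this abstract (local training, server-side aggregation, repeat until a threshold is reached) can be sketched with plain federated averaging on a toy linear model. This is a minimal sketch under stated assumptions, not the partial-model strategy proposed in the project: the synthetic data, learning rate, loss threshold, and all function names are illustrative choices.

```python
import random


def local_update(weights, data, lr=0.05, epochs=5):
    """Run a few gradient-descent epochs on one device's private data."""
    w, b = weights
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / len(data)
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / len(data)
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def aggregate(client_weights, client_sizes):
    """Server step: average parameters, weighted by each device's sample count."""
    total = sum(client_sizes)
    w = sum(cw[0] * n for cw, n in zip(client_weights, client_sizes)) / total
    b = sum(cw[1] * n for cw, n in zip(client_weights, client_sizes)) / total
    return (w, b)


def mse(weights, data):
    w, b = weights
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def federated_train(clients, rounds=200, target_loss=1e-3):
    """Repeat local training and aggregation until the loss threshold is met."""
    global_weights = (0.0, 0.0)
    sizes = [len(d) for d in clients]
    for round_idx in range(rounds):
        # Only parameters leave the devices; the raw data stays local.
        updates = [local_update(global_weights, d) for d in clients]
        global_weights = aggregate(updates, sizes)
        # Loss is pooled here for illustration only; a real server would
        # rely on metrics reported by the devices instead.
        loss = sum(mse(global_weights, d) * n for d, n in zip(clients, sizes)) / sum(sizes)
        if loss < target_loss:
            break
    return global_weights, round_idx + 1


def make_clients(seed=0):
    """Three hypothetical devices holding different amounts of data for y = 3x + 1."""
    rng = random.Random(seed)
    clients = []
    for n in (20, 35, 50):
        xs = [rng.uniform(-1, 1) for _ in range(n)]
        clients.append([(x, 3.0 * x + 1.0) for x in xs])
    return clients


if __name__ == "__main__":
    weights, rounds_used = federated_train(make_clients())
    print(weights, rounds_used)
```

In this sketch every device trains the full model. A resource-aware variant would instead have each device update only a subset of the parameters, which is the direction the abstract describes.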

Shahen Alexanian

Program: USRA

Supervisor: Suprakash Datta

Abstract: An Empirical Examination of Various Reinforcement Learning Algorithms in a Deterministic Environment

Reinforcement learning (RL) is a powerful learning tool that allows a computer agent to use its past experience of rewards received from its actions to formulate an optimal decision-making policy to maximize future rewards. As the use of computers as learning/semi-autonomous agents has increased in recent years, so has the importance of RL in training these agents in contexts where other machine-learning methods are unsuitable.

To provide a controlled and deterministic environment for testing these concepts, the 8-bit video game Arkanoid (a block-breaking game in the style of Breakout) was used in conjunction with the open-source OpenAI Gym and Gym Retro environments to teach a computer agent to play the game. The agent used only the raw pixel data from the screen display, run through a convolutional neural network, and a rudimentary reward function based on the agent’s score and remaining lives. In our experiments, the RL agent demonstrated slow but steady progress towards learning how to solve the game.

Recent research has proposed other, potentially more effective approaches to training an RL agent in order to speed up its convergence to the learning goal. These include using both the full screen and a smaller, “zoomed-in” version of the screen centred on the playable character in parallel, as well as introducing the concept of “regret” so that the RL agent can recall specific actions that led to negative outcomes. The results of these alternate approaches to training will be examined, and any generalizations of these empirical results will be discussed.
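
The idea of learning a policy from past rewards in a deterministic environment can be illustrated with tabular Q-learning on a tiny corridor world. This is a sketch only and much simpler than the pixel-based, neural-network agent described in the abstract: the environment, reward, and hyperparameters below are all assumptions chosen for illustration.

```python
import random

N_STATES = 6            # corridor cells 0..5
ACTIONS = (0, 1)        # 0 = move left, 1 = move right
GOAL = N_STATES - 1     # reaching the last cell ends the episode


def step(state, action):
    """Deterministic transition: the same state and action always give the same result."""
    next_state = max(0, state - 1) if action == 0 else min(GOAL, state + 1)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values with epsilon-greedy Q-learning."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                # Break ties at random so an untrained agent still explores.
                best = max(q[state])
                action = rng.choice([a for a in ACTIONS if q[state][a] == best])
            next_state, reward, done = step(state, action)
            # Move the estimate toward reward plus discounted future value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q):
    """Best known action for every non-terminal state."""
    return [max(ACTIONS, key=lambda a: q[s][a]) for s in range(GOAL)]


if __name__ == "__main__":
    print(greedy_policy(train()))
```

With these settings the greedy policy should settle on moving right in every cell, since that is the shortest route to the only reward. A game such as Arkanoid replaces the table with a neural network because the number of possible screen states is far too large to enumerate.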

Aakash Agarwal

Program: Mitacs Globalink

Supervisor: Ping Wang

Abstract: Caching Strategy based on Deep Reinforcement Learning for IoT networks using Transient Data

In recent years, the Internet of Things (IoT) has grown steadily, and its potential is now clearer. The primary limitations of these networks are the transient nature of the data they generate and scarce energy supplies. In addition, minimum delay and other Quality of Service (QoS) requirements must still be met. An effective caching policy can help meet general QoS standards while overcoming the unique restrictions of IoT networks. Deep reinforcement learning (DRL) algorithms make it possible to create a powerful caching system without requiring any prior knowledge or contextual information. In this work, we aim to improve the cache hit rate and reduce the energy consumption of the IoT network by proposing a DRL-based caching scheme. The freshness and the lifetime of the data are considered. Furthermore, we allow the data files to differ in size, which has not yet been explored and makes our work better suited to real-life scenarios. We suggest a hierarchical architecture for deploying edge caching nodes in IoT networks in order to capture the regionally distinct popularity distribution more accurately. Extensive testing reveals that our suggested solution outperforms well-known traditional caching policies by significant margins in terms of cache hit rate and energy usage of the IoT networks.
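
To make the setting concrete, the sketch below models a cache for transient data in which every item has its own size and lifetime, and reports the cache hit rate. It uses least-recently-used (LRU) eviction, which is one of the traditional policies a DRL scheme would be compared against, not the DRL scheme itself. The class name, capacity units, and example items are hypothetical.

```python
from collections import OrderedDict


class TransientCache:
    """LRU cache for data items that have a size and a limited lifetime."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.used = 0
        self.items = OrderedDict()  # key -> (size, expiry_time), oldest first
        self.hits = 0
        self.requests = 0

    def _evict(self, key):
        size, _ = self.items.pop(key)
        self.used -= size

    def get(self, key, now):
        """Return True on a fresh hit; stale entries are dropped and count as misses."""
        self.requests += 1
        entry = self.items.get(key)
        if entry is not None and entry[1] > now:
            self.items.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return True
        if entry is not None:
            self._evict(key)  # expired: the data is no longer fresh
        return False

    def put(self, key, size, lifetime, now):
        """Store an item, evicting expired items first and then the least recently used."""
        if size > self.capacity:
            return False
        if key in self.items:
            self._evict(key)
        for stale in [k for k, (_, exp) in self.items.items() if exp <= now]:
            self._evict(stale)
        while self.used + size > self.capacity:
            self._evict(next(iter(self.items)))
        self.items[key] = (size, now + lifetime)
        self.used += size
        return True

    def hit_rate(self):
        return self.hits / self.requests if self.requests else 0.0


if __name__ == "__main__":
    cache = TransientCache(capacity=10)
    cache.put("temperature", size=4, lifetime=5, now=0)
    cache.put("humidity", size=6, lifetime=20, now=0)
    cache.get("temperature", now=3)
    cache.get("temperature", now=6)
    print(cache.hit_rate())
```

A learned policy would replace the fixed eviction rule in `put` with a decision based on observed request patterns, item sizes, and remaining lifetimes.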

Xuchen Tan

Program: LURA